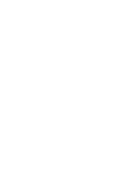

3. Humanitarian Accountability Framework (HAF)

CARE is committed to a wide range of internal and inter-agency policies and standards. Central to these standards and policies is our commitment to being directly accountable for the quality of our work by making certain that communities affected by disasters have a say in planning, implementing and judging CARE’s response. However, the large number of different standards and accountability initiatives can be confusing.

To simplify their application, CARE’s Humanitarian Accountability Framework (HAF), first developed in 2010 and revised in 2016:

- shows how they relate to each other, rather than providing an entirely new set of standards or benchmarks

- clarifies what CARE staff (CO staff, Lead Members, CEG, CARE members) will actually be held accountable for during emergency responses

- defines compliance systems to ensure implementation of the HAF is driven by senior management, who are expected to model appropriate behaviours to ensure genuine accountability

- is a policy statement and framework on quality and accountability for CARE’s humanitarian mandate. It sets out how accountability is a constant, guiding principle in CARE’s humanitarian work which, when applied at every stage of the programme cycle, provides an essential means of achieving high programme quality standards and the greatest impact.

All CARE staff and offices should understand and comply with the HAF in the design, delivery and review of humanitarian programming. Compliance requires meaningful engagement with, and the empowerment of, people affected by crisis, partners and CARE staff. As such, the HAF prescribes a culture of transparency and learning to drive real-time and long-term improvements in CARE’s responses to humanitarian crises. The HAF is also a vehicle for bringing CARE together with focus, coherence and consistency to achieve high-quality, accountable humanitarian programming across the globe.

The HAF has three pillars, which are described in more detail in the following sections:

3.1 COMMITMENTS on Humanitarian Quality and Accountability

3.2 TARGETS for CARE’s Humanitarian Performance

3.3 ACCOUNTABILITY SYSTEM for monitoring compliance with commitments and performance against targets

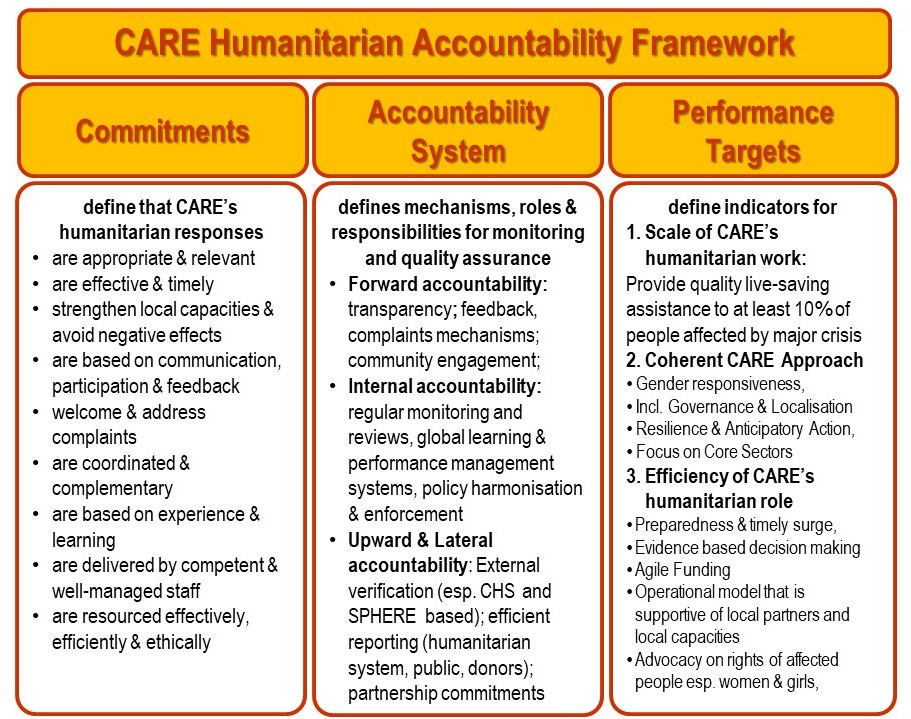

3.1 Commitments on Humanitarian Quality and Accountability

The nine Commitments on Humanitarian Quality and Accountability included in CARE’s Humanitarian Accountability Framework (HAF) are, in principle, the same as those set out in the Core Humanitarian Standard (CHS).

They replace the Benchmarks of the 2010 HAF.

3.1.1 The Core Humanitarian Standard (CHS)

The Core Humanitarian Standard (CHS), launched in December 2014, is a set of nine Commitments to communities and people affected by crisis that encapsulate principled, accountable and good-quality humanitarian action. Although currently a voluntary code for humanitarian actors, the CHS aims to become a means of certifying agencies that deliver humanitarian aid. CARE contributed to the development of the CHS and became a founding member of the CHS Alliance in June 2015.

The CHS replaces the 2010 HAP Standards, the People in Aid Code of Good Practice and (in 2017) the Sphere Project core standards, but sits alongside the Sphere Project Technical Standards and the Code of Conduct for the International Red Cross and Red Crescent Movement and NGOs in Disaster Relief as an inter-agency humanitarian standard.

While the CHS is intended in part to consolidate the many standards produced over recent decades to guide global humanitarian programming, its raison d’être is to facilitate greater accountability to people affected by crisis, because ‘knowing what humanitarian organisations have committed to will enable them to hold those organisations to account’. In other words, the CHS places people affected by crisis at the centre of humanitarian action and promotes respect for their fundamental human rights.

For more general information about the CHS, refer to the CHS Guidance Note and Indicators, the FAQs about the CHS and Sphere, the FAQs about the CHS and CARE, and the CHS folder in Minerva.

3.1.2 CHS quality criteria and indicators

Each CHS Commitment includes its own set of Performance Indicators, Key Actions and Organisational Responsibilities against which CARE is able to test and demonstrate its compliance.

As a founding member of the CHS Alliance, CARE is also committed to contributing to the continuous improvement of CHS verification tools and mechanisms. CARE may therefore adapt any of the CHS quality criteria, and particularly the CHS indicators, where it deems this appropriate to reflect its specific aspirations, especially with regard to gender equality, women’s voice and inclusive governance. In all cases, CARE will treat the CHS indicators as the minimum standard.

| Commitment | Communities and people affected by crisis… | Quality Criterion |
|---|---|---|
| 1 | …receive assistance appropriate and relevant to their needs | Humanitarian response is appropriate and relevant |
| 2 | …have access to the humanitarian assistance they need at the right time | Humanitarian response is effective and timely |
| 3 | …are not negatively affected and are more prepared, resilient and less at-risk as a result of humanitarian action | Humanitarian response strengthens local capacities and avoids negative effects |
| 4 | …know their rights and entitlements, have access to information and participate in decisions that affect them | Humanitarian response is based on communication, participation and feedback |
| 5 | …have access to safe and responsive mechanisms to handle complaints | Complaints are welcomed and addressed |
| 6 | …receive coordinated, complementary assistance | Humanitarian response is coordinated and complementary |
| 7 | …can expect delivery of improved assistance | Humanitarian actors continuously learn and improve |
| 8 | …receive the assistance they require from competent and well-managed staff and volunteers | Staff are supported to do their job effectively, and are treated fairly and equitably |
| 9 | …can expect that the organisations assisting them are managing resources effectively, efficiently and ethically | Resources are managed and used responsibly for their intended purpose |

Refer to the generic CHS Guidance Note and Indicators and CHS self-assessment tool for further details and guidance relating to the monitoring of each Commitment.

Within CARE, the performance of a response against these quality criteria is measured through ongoing monitoring (see M&E chapter), Rapid Accountability Reviews (RAR) and CHS verification processes, as described in the HAF Accountability System section below. Please also refer to the CHS Scorecard for a snapshot of CARE’s performance against these criteria.

3.1.3 CHS Verification

CARE is committed not only to upholding and promoting high standards of quality and accountability in its humanitarian programming, but also to demonstrating its own best practice. CARE is required to follow the four-stage CHS Verification Scheme, managed by the CHS Alliance – self-assessment, peer review, independent verification and certification – which allows it to measure and evidence its compliance with CHS Commitments at varied levels of rigour and objectivity.

The CHS self-assessment tool guides organisations through the collection of evidence, including structured feedback from staff, partners and people affected by crisis, evidence generated from the monitoring and evaluation of humanitarian actions, and the review of documented organisational policies and procedures. The tool includes a scorecard that generates top-line scores against each of the nine Commitments, scores for diversity, and scores for every Performance Indicator, Key Action and Organisational Responsibility that falls under each Commitment.

In CARE’s case, and for the sake of efficiency and complementarity, CHS verification processes will draw on existing processes and tools:

- CHS Key Actions: routine monitoring and evaluation, and particularly the Rapid Accountability Reviews (RAR) that take place during each CARE emergency response and are centred on the nine CHS Commitments, will generate the core of the required data and documentation.

- CHS Organisational Responsibilities: Lessons and recommendations from After Action Reviews and response evaluations will ensure that CHS verification processes are rooted in the real, current experience across emergencies and across the CARE confederation.

For further details about the link between CARE’s accountability processes and CHS assessments, refer to section 3.3 (HAF Accountability System).

Under the leadership of the CARE Emergency Group (CEG), CARE will complete its first CHS self-assessment by June 2017 and will then set out a timeline and process for achieving full CHS certification.

3.2 Targets for CARE’s Humanitarian Performance

The Humanitarian Performance Targets included in CARE’s Humanitarian Accountability Framework (HAF) are defined by CARE’s Vision 2030, including its specific priorities and critical outcomes for CARE’s humanitarian impact area. They therefore relate to CARE’s strategic performance as a humanitarian organisation over a defined period of change, and to its specific aspirations.

The Performance Indicators are clustered into Performance Areas, which relate to different components of the Global Programme Strategy as well as to the CHS. Each Performance Area has its own set of indicators and targets against which CARE can measure its performance during each emergency response, across the organisation and over time.

PERFORMANCE TARGETS FOR CARE’S HUMANITARIAN RESPONSES

| Performance Area | Description |
|---|---|
| A: Scale and Scope | The scale of CARE’s response(s), in terms of the number of people affected by humanitarian crisis, is defined as: 10% of people affected by major crises receive quality, gender-responsive humanitarian assistance and protection which is locally led. The technical scope of CARE’s humanitarian response(s) remains focused on four core sectors (WASH, Shelter, Food & Nutrition Security, and Sexual and Reproductive Health and Rights). |
| B: Relevance | This performance area refers to the appropriateness of CARE’s response, including as expressed by crisis-affected people (see section 3.2.2). |
| C: The CARE Approach | The indicators and targets in this performance area refer to the global indicators related to the three elements of the CARE Approach (see section 3.2.3). |
| D: Efficiency | This performance area includes indicators and targets related to CARE’s strength in playing its humanitarian role (a) effectively, in terms of emergency preparedness and surge capacity; (b) efficiently, in terms of coherence, leadership and decision-making, fundraising and fund management; (c) collaboratively, through coordination, partnerships and localisation as well as information sharing and advocacy. Reference: CARE’s Humanitarian & Emergency Strategy 2013-2020 and CARE International Protocols. |
| E: Alignment with Global Standards | This set of performance indicators is adopted from the CHS, with the targets adapted to CARE-specific aspirations, policies and protocols. |

More specific targets can be agreed for a particular response by the appropriate decision-making body, such as the Crisis Coordination Group (CCG), especially if deviations from the generic targets are likely and appropriate in the response context (for more detail about response-specific decision making, see the Emergency Management Protocols in Chapter xx).

Information on the Performance Targets generated through CARE’s internal reporting mechanisms can be synthesised and analysed with the help of the Response Performance Summary (RPS) tool. An RPS is usually prepared at least to process information collected during a Rapid Accountability Review (RAR) and/or to inform an After Action Review (AAR), but it can also support continuous response performance management.

Aggregated data from CARE’s responses provide the Humanitarian Performance Metrics, which contribute to the analysis required to assess CARE’s institutional strength. The HAF Accountability System establishes the linkages between the monitoring and review mechanisms so that CARE’s performance as a humanitarian organisation can be documented.

3.2.1 Scale and Scope

The CARE Program Strategy indicates that CARE is committed to the provision of quality, gender-responsive humanitarian assistance and protection which is locally-led.

The target scale for this goal is at least 10% of the people in need of humanitarian assistance (as defined by OCHA) participating in CARE’s humanitarian programmes with the characteristics described above. Based on various forecasts of trends in natural disasters and human-made crises, we estimate that this most likely translates into at least 50 million people affected by major crises who, with the support of CARE and its partners, will receive quality, gender-responsive humanitarian assistance and protection which is locally led.

Two global indicators allow CARE to monitor this goal and its targets:

- Indicator 19: # and % of people satisfied with the safety, adequacy, inclusiveness and accountability of humanitarian assistance and/or protection services provided by CARE and partners.

- Indicator 20: # and % of people (as % of People in Need where applicable) who obtained (directly or indirectly) humanitarian support and/or protection services provided by, or with support from, CARE and partners in line with global standards of lifesaving and quality assistance.
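To illustrate how the "#" and "%" values for these two indicators might be derived for a single response, the sketch below computes them from raw counts. It is a minimal, non-authoritative sketch assuming the response team holds an OCHA People in Need figure, a count of people reached and the results of a satisfaction survey; the `ResponseReach` record, its field names and the `pct` and `summarise` helpers are hypothetical and are not part of PIIRS or any other CARE system. The global 10% benchmark is used only as a default, since each response sets its own targets.

```python
from dataclasses import dataclass

# Global benchmark from section 3.2.1 (at least 10% of People in Need);
# used only as a default, because each response sets its own targets.
GLOBAL_SCALE_TARGET_PCT = 10.0


@dataclass
class ResponseReach:
    """Hypothetical per-response record; field names are illustrative only."""
    name: str
    people_in_need: int    # OCHA-defined People in Need (PiN) for the response
    people_reached: int    # people reached directly or indirectly (indicator 20)
    people_surveyed: int   # respondents asked about satisfaction (indicator 19)
    people_satisfied: int  # respondents satisfied with safety, adequacy, inclusiveness and accountability


def pct(part: int, whole: int) -> float:
    """Return part as a percentage of whole, or 0.0 when whole is zero."""
    return 100.0 * part / whole if whole else 0.0


def summarise(r: ResponseReach, target_pct: float = GLOBAL_SCALE_TARGET_PCT) -> dict:
    """Compute '#' and '%' values for indicators 19 and 20 for one response."""
    reach_pct = pct(r.people_reached, r.people_in_need)
    return {
        "response": r.name,
        "ind19_satisfied": r.people_satisfied,
        "ind19_pct_satisfied": round(pct(r.people_satisfied, r.people_surveyed), 1),
        "ind20_reached": r.people_reached,
        "ind20_pct_of_pin": round(reach_pct, 1),
        "meets_scale_target": reach_pct >= target_pct,
    }


if __name__ == "__main__":
    # Made-up figures, for illustration only.
    example = ResponseReach("Example response", 800_000, 96_000, 400, 312)
    print(summarise(example))
```

With these made-up figures, 96,000 people reached out of 800,000 People in Need gives 12.0% for indicator 20, and 312 satisfied respondents out of 400 gives 78.0% for indicator 19.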

Each response will have its own specific targets based on the operational environment and on its focus on people with particular vulnerability profiles. Each response team should define and justify the precise scale of the planned interventions in a response strategy.

Furthermore, every humanitarian response by CARE is expected to reflect CARE’s focus on four core sectors: WASH, Shelter, Food & Nutrition Security, and Sexual, Maternal & Reproductive Health in emergencies (see the relevant sections in the CET). For each type of intervention, the response strategy should set out specific targets and time frames for monitoring and reporting, both within CARE and in the wider humanitarian system. In principle, however, CARE aims to develop and implement humanitarian responses which:

- focus largely on no more than one or two core sectors

- show clear complementarity between interventions of different sectors

3.2.2 Relevance of the Response

CHS Commitments 1 and 2 relate to the Appropriateness, Impartiality, Effectiveness and Timeliness of the response, as:

- expressed in the relevant Humanitarian Principles

- defined by the CHS performance indicators for commitments 1 and 2

- laid out by CARE protocols and SOPs

The related Performance Indicators therefore require the documentation of internal processes and decisions, as well as monitoring of affected people’s satisfaction with CARE’s humanitarian interventions.

3.2.3 Alignment with the CARE Approach

To align with the Global Programme Strategy, CARE teams are expected to develop response strategies and implement humanitarian responses that comply with the criteria established by the CARE Approach Markers:

- Gender Marker (minimum score: 2)

- Inclusive Governance Marker (minimum score: 1)

- Resilience Marker (minimum score: 1): responses should support build-back-stronger approaches to recovery and link to recognised resilience strategies
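The marker thresholds above amount to a simple minimum-score check. The sketch below is hypothetical: the marker keys, the `marker_shortfalls` and `meets_care_approach_minimums` helpers, and the choice to treat an unscored marker as 0 are assumptions for illustration only. How each marker is actually scored is defined by the CARE Approach Marker guidance, not by this code.

```python
# Minimum CARE Approach Marker scores as listed in section 3.2.3.
MINIMUM_MARKER_SCORES = {
    "gender": 2,
    "inclusive_governance": 1,
    "resilience": 1,
}


def marker_shortfalls(scores: dict[str, int]) -> dict[str, int]:
    """Return, for each marker below its minimum, how many points are missing.

    Assumption: a marker missing from `scores` is treated as scoring 0.
    """
    shortfalls = {}
    for marker, minimum in MINIMUM_MARKER_SCORES.items():
        score = scores.get(marker, 0)
        if score < minimum:
            shortfalls[marker] = minimum - score
    return shortfalls


def meets_care_approach_minimums(scores: dict[str, int]) -> bool:
    """True when every marker meets or exceeds its minimum score."""
    return not marker_shortfalls(scores)


if __name__ == "__main__":
    # Hypothetical scores for one response strategy.
    print(marker_shortfalls({"gender": 1, "inclusive_governance": 1, "resilience": 0}))
```

Returning the shortfall per marker, rather than a single pass/fail, points a response team to the specific marker that needs attention.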

3.2.4 Efficiency

In its Humanitarian and Emergency Strategy (HES) CARE states its ambition to be a leading humanitarian agency known for its particular ability to reach and empower women and girls in emergencies as well as:

- its culture of humanitarian leadership and accountability at all levels

- the efficiency of its operational models which expand and nurture strategic partnerships with traditional and non-traditional actors at the local, national, regional and global levels

- the talent, capabilities and capacity of its staff in terms of global preparedness, surge and response management capacity

- its sustainable business model which ensures adequate funding and effective use of resources for humanitarian preparedness and response

The HES sets out organisation-wide strategic priorities for achieving the institutional and operational strengths required to establish CARE as a leading humanitarian agency. The following table provides an overview of the core performance criteria linked to each strategic priority.

| Strategic Priority | Institutional Strength | Operational Strength |
|---|---|---|
| Leadership & Authority | efficient global and regional decision-making mechanisms for all types of crisis and response; efficient corporate response mobilisation mechanisms; corporate accountability mechanisms | clear and transparent operational decision-making authority; efficient response monitoring and reporting; timely response reviews and management responses |
| Operating Model | global partnership approach with investment in strategic alliances; corporate preparedness and risk management mechanisms; efficient remote management systems | efficient response preparedness; efficient operational cooperation and collaboration; effective integration and support of local actors and responders |
| Capacity & Capabilities | globally funded and managed surge, flexible response and support, and risk analysis and management capacities; coordinated staff training, talent management and human resource support mechanisms | adequate response management capacity based on response-specific assessments, enhanced through deployments and complemented through appropriate partnerships |
| Business Model | global communication and information management systems; pooled rapid funding mechanisms; programme support capacity at corporate level; geographically focused preparedness and response management platforms | timely public communications; efficient fundraising and information management; adequate response funding (pooled and response-strategy based); efficient management of response-specific and reallocated resources |

3.2.5 Management against Global Standards

Content to be developed.

3.3 Accountability System for monitoring compliance with commitments and performance against targets

All humanitarian programme monitoring and evaluation is part of CARE’s commitment to accountability and is connected to CARE’s HAF Accountability System. The HAF Accountability System is designed to monitor how well CARE performs, within each emergency response, against both its Humanitarian Quality and Accountability Commitments and its Humanitarian Performance Targets. The system also allows the performance of individual responses to be compared with one another, across the globe and over time.

CARE’s Programme Information and Impact Reporting System (PIIRS) and the CHS verification process will support the synthesis and analysis of CARE’s organisation-wide performance against HAF targets and commitments, which will be summarised and presented in the annual Humanitarian Performance Metrics reports.

Key HAF related reporting outputs include:

AT RESPONSE LEVEL:

- project and programme monitoring reports (incl. mandatory sitreps / humanitarian updates)

- Rapid Accountability Reviews (RAR)

- After Action Reviews (AAR)

- Response Performance Summaries (RPS)

- Reports on Accountability Matrices (for Type 4 responses)

- project, programme and thematic evaluation reports

AT GLOBAL LEVEL:

- Crisis Overviews

- Humanitarian Performance Metrics reports (annual synthesis of RPS findings and scores)

- CHS alignment reports and rolling scorecard (bi-annual, public)

- Reach data and outcome information included in CARE’s global Project and Program Information and Impact Reporting System (PIIRS)

Monitoring, RAR and AAR outputs as well as the RPS are shared internally within CARE through the Crisis Coordination Group and ERWG in order to allow for immediate management response action. Core data from these sources are also stored in CARE’s database for humanitarian crisis and responses. The database is currently under construction and will ultimately allow the visualization of real time performance data for all stakeholders in CARE for enhanced management efficiency, transparency and mutual accountability.

Humanitarian Performance Metrics reports are compiled each year for the CARE International Senior Leadership Team (Humanitarian & Operations). For the sake of accountability, all Performance Metrics reports, evaluation reports and CHS verification outputs are shared via CARE International’s Electronic Evaluation Library (EEL). Synthesised results of the CHS verifications will be made public, together with the related improvement plans, as required by the statutes of the CHS Alliance.

For fuller guidance on all monitoring and evaluation, please refer to the section below on Accountability Monitoring (incl. Rapid Accountability Reviews – RAR). For AAR reports, Performance Metrics reports and other useful documents, please refer to the Annexes in this chapter.

3.3.1 Accountability monitoring

The term ‘accountability monitoring’ is used to mean the monitoring of our performance on accountability, as described by the third pillar of the CARE Humanitarian Accountability Framework (HAF). Chapter 32 of the CET gives a more detailed description of CARE’s Quality and Accountability commitments for humanitarian programming.

Accountability monitoring can help CARE to:

- Check that the accountability systems that have been set up are working effectively.

- Focus our monitoring on approaches, processes, relationships and behaviours, quality of work and satisfaction, as well as on outputs and activities.

- Prioritise listening to the views of disaster-affected people to assess our impact and identify improvements.

- Provide a feedback opportunity for staff, communities and other key stakeholders to comment on our response and how we are complying with our standards and benchmarks.

Accountability monitoring contributes to CARE’s overall monitoring and evaluation activities. Aspects of it can be integrated into other project monitoring tools or carried out as a specific activity, e.g. a beneficiary satisfaction survey or a focus group discussion (FGD) that solicits feedback and complaints, as part of a formal complaints mechanism, from specific groups within the crisis-affected population, such as people with specific vulnerabilities or those in isolated communities. Ideally, accountability mechanisms and the monitoring of their effectiveness should be built into project proposals from the outset.

Accountability data (including complaints data) needs to be incorporated into monitoring reporting, alongside monitoring of project progress.

Rapid Accountability Review (RAR)

The Rapid Accountability Review (RAR) is the central tool for accountability monitoring in CARE’s humanitarian programmes.

What is a Rapid Accountability Review?

A RAR is a rapid performance assessment of an emergency response against CARE’s HAF that takes place within the first few months of the response. It generates findings and recommendations that are used to make immediate adjustments to the response, and it is a key source for any response review and performance management process. It usually involves interviews with CARE management, staff, communities and other key external stakeholders, and is led by an independent team leader.

What is the purpose of a RAR?

The overall goal of the RAR is to improve the quality of CARE’s response by assessing its compliance with established good accountability practice. More specifically, the RAR:

- Provides a real-time assessment of HAF compliance early in a humanitarian response

- Ensures that the views of our key stakeholders are taken into account in making adjustments to our response and in drawing lessons learned

- Identifies good practices and highlights gaps (including capacity gaps) and areas for improvement

- Makes recommendations to CARE management (CO, CI and CARE Members) for immediate action related to the ongoing response

When does it take place?

- Ideally, a RAR is conducted within 2 months of the start of an emergency event, and feeds into the general response review and performance management process

- A similar process can also be repeated at later stages of the response to take stock of HAF compliance and improvements made, or to feed into a particular event such as a response evaluation, an emergency strategy review or an EPP event

How to conduct a RAR?

Detailed information about how to conduct a RAR can be found in the Annex 9.6 RAR-Guidance.

In summary a Rapid Accountability Review should ideally include:

- A self-assessment by CARE staff and partners against relevant indicators (see Annex 9.7: staff engagement)

- Focus group discussions with affected populations (see Annexes 9.8a, 9.8b and 9.9)

- Key informant interviews

- A synthesis and analysis meeting or workshop to review the results of the above and prepare lessons and recommendations for the ongoing response and for an After Action Review (AAR; see section 3.3.2, Learning and Evaluation Activities, below)

Other examples of accountability monitoring tools can be found in Annex 9.9a (Sample of accountability monitoring tools), including:

- Checklists.

- Simple questionnaires.

- Focus group discussion tools.

- Staff review tools.

- Monitoring tool to help research into local communities’ views.

The RAR Summary (Annex 9.6a) is a tool for synthesising the information collected during accountability monitoring in an organised way against the nine commitments of the HAF. It also identifies which key performance criteria are relevant at which stage of the response, or for which level of accountability review (light, basic or comprehensive).

Reporting formats can vary depending on the methodology and format of the RAR. Here are a few examples:

3.3.2 Learning and Evaluation Activities

Checklist

- Organise an After Action Review.

- Conduct an evaluation when required.

CARE’s Policy on Evaluations is available at Annex 9.1. This policy highlights CARE’s commitment to learning from humanitarian responses with a view to improving our practices and policies for future responses. All CARE COs are required to comply with this learning policy, and CEG can provide support and advice on learning activities.

3.3.2.1 Organising an After Action Review

CARE’s policy requires COs to hold an AAR for each large-scale (Type 2 and 4) humanitarian crisis. Country Offices responding to smaller (Type 1) crises are also encouraged to conduct a brief ‘lessons learned’ exercise following the response.

What is an After Action Review (AAR)?

An AAR is an internal performance review and lessons-learned exercise that takes place within the first 3-4 months of a crisis response. It usually takes a workshop format and brings together key staff who have been involved in the response from the CO, the CARE Lead Member and other parts of CARE. It is facilitated by someone independent of (i.e. external to) the response and takes into account external and internal feedback collected before the workshop. An AAR draws both positive and negative lessons and leads to recommendations to CARE management for improving humanitarian response policy and practice.
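The timing guidance for the RAR (ideally within 2 months of the start of the emergency event) and the AAR (within the first 3-4 months of the response) can be turned into planning dates. This is a sketch under stated assumptions: "months" are taken as calendar months counted from a single start date used for both reviews, the AAR date uses the outer end of the 3-4 month window, and `add_months` and `review_deadlines` are hypothetical helpers rather than part of any CARE tool.

```python
import calendar
from datetime import date


def add_months(start: date, months: int) -> date:
    """Add calendar months to a date, clamping the day to the target month's length."""
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    # calendar.monthrange returns (weekday of first day, number of days in month)
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def review_deadlines(response_start: date) -> dict[str, date]:
    """Hypothetical planning dates for the RAR and AAR of one response."""
    return {
        # RAR: ideally within 2 months of the start of the emergency event
        "rar_ideally_by": add_months(response_start, 2),
        # AAR: within the first 3-4 months; the outer end (4 months) is assumed here
        "aar_by": add_months(response_start, 4),
    }


if __name__ == "__main__":
    for name, due in review_deadlines(date(2016, 12, 31)).items():
        print(name, due.isoformat())
```

For a response starting on 31 December 2016, this gives 28 February 2017 for the RAR and 30 April 2017 for the AAR, because the day is clamped to the end of shorter months.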

What is the purpose of an AAR?

The overall goal of the AAR is to contribute to CARE’s understanding of its crisis response performance and to help promote learning and accountability throughout CARE International. More specifically, the AAR:

- Provides a space for staff to capture key learning at a critical juncture of a humanitarian crisis response

- Generates lessons learned that can be shared across CI

- Makes recommendations to CARE management (CO, CI and CARE Members) for improving humanitarian response policy and practice

The following annexes can assist with organising an After Action Review:

Annex 9.10 Practical guidance for organising an AAR

Annex 9.10a Terms of Reference for AAR facilitator

Annex 9.10b Sample AAR agenda

Annex 9.10c Sample AAR report

3.3.2.2 Commissioning and managing an evaluation

An evaluation can be defined as ‘…a systematic and impartial examination of humanitarian action intended to draw lessons to improve policy and practice and enhance accountability’ (ALNAP, 2001). CARE’s current policy states that external evaluations are optional but will usually be carried out where the response meets at least one of the following conditions:

- it involves a large-scale commitment of resources

- it has strategic implications for CARE

- it has piloted innovative approaches that could become standard good practice in future emergency responses.
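The AAR and evaluation rules in this section can be summarised as a simple decision sketch. It only restates the policy text (AAR required for Type 2 and 4 crises, a brief lessons-learned exercise encouraged for Type 1; external evaluation usually carried out when at least one of the three conditions holds) and does not replace the judgement of the CO or CEG. The `ResponseProfile` record and the `aar_status` and `learning_plan` helpers are hypothetical names used for illustration.

```python
from dataclasses import dataclass


@dataclass
class ResponseProfile:
    """Hypothetical summary of a response; field names are illustrative only."""
    response_type: int            # CARE response type (Types 1, 2 and 4 are named in this section)
    large_scale_resources: bool   # involves a large-scale commitment of resources
    strategic_implications: bool  # has strategic implications for CARE
    piloted_innovation: bool      # piloted approaches that could become standard good practice


def aar_status(response_type: int) -> str:
    """AAR expectation by response type, as described in this chapter."""
    if response_type in (2, 4):
        return "required"
    if response_type == 1:
        return "encouraged (brief lessons-learned exercise)"
    return "not specified in this section"


def learning_plan(p: ResponseProfile) -> dict:
    """Summarise which learning activities the policy text points to."""
    return {
        "after_action_review": aar_status(p.response_type),
        # External evaluations are optional; they are usually carried out
        # when at least one of the three conditions holds.
        "external_evaluation_usual": any(
            (p.large_scale_resources, p.strategic_implications, p.piloted_innovation)
        ),
    }


if __name__ == "__main__":
    print(learning_plan(ResponseProfile(2, True, False, False)))
```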

An evaluation should be led by an external, independent facilitator. The terms of reference for the evaluation should describe how the results will be used.

As with the AAR, the CO has primary responsibility for identifying funding and for organising and managing the evaluation. An external evaluation led by a professional evaluator typically costs USD 20,000-35,000. In some circumstances, a joint evaluation with partner agencies may be more appropriate. Although an evaluation (with the exception of real-time evaluations) usually does not take place until several months after the emergency event, a few planning and budgeting steps need to be taken during the early stages of an emergency response. For more details, see the following annexes.

Annex 9.14 Sample TOR for an evaluation

Annex 9.15 Sample format for an evaluation report

Annex 9.16 ALNAP’s evaluation quality proforma